Build at the command prompt

$ git clone --branch v3.3.0 --depth 1 https://github.com/apache/spark.git

Install Java 8 with asdf.

$ brew install asdf

$ echo -e "\n. $(brew --prefix asdf)/libexec/asdf.sh" >> ${ZDOTDIR:-~}/.zshrc

$ asdf --version

v0.10.2

$ asdf plugin-add java

$ asdf list-all java

$ asdf install java corretto-8.342.07.3

$ asdf global java corretto-8.342.07.3

$ echo ". ~/.asdf/plugins/java/set-java-home.zsh" >> ~/.zprofile
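Each rerun of the two `echo ... >>` setup lines appends the same line to ~/.zshrc or ~/.zprofile again. Below is a minimal sketch of an idempotent alternative. `append_once` is a hypothetical function, not part of asdf. It uses grep to check for the exact line before appending.

```shell
# Hypothetical helper (not part of asdf): append a line to a shell startup
# file only if that exact line is not already present.
append_once() {
  local line="$1" file="$2"
  # -q: quiet, -x: match the whole line, -F: treat the pattern as a fixed string.
  # A missing file makes grep fail, so the line is appended and the file created.
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}
```

$ append_once '. ~/.asdf/plugins/java/set-java-home.zsh' ~/.zprofile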

$ java -version

openjdk version "1.8.0_342"

OpenJDK Runtime Environment Corretto-8.342.07.3 (build 1.8.0_342-b07)

OpenJDK 64-Bit Server VM Corretto-8.342.07.3 (build 25.342-b07, mixed mode)
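A script can check that the JDK on PATH is the asdf-selected Java 8 by parsing the version out of `java -version`. This is a sketch with two hypothetical helper functions. It assumes the output format shown above, where the version is the first quoted string.

```shell
# Hypothetical helpers (not part of asdf or the JDK).

# Read `java -version` output on stdin and print the quoted version string,
# e.g. 1.8.0_342 from the line: openjdk version "1.8.0_342"
java_version_from_output() {
  awk -F '"' '/ version "/ { print $2; exit }'
}

# Print the major version: "1.8.0_342" -> 8 (pre-Java 9 scheme), "17.0.4" -> 17.
java_major_version() {
  local v="$1"
  case "$v" in
    1.*) v="${v#1.}" ;;   # legacy scheme: drop the leading "1."
  esac
  echo "${v%%[._]*}"      # keep everything before the first "." or "_"
}
```

`java -version` writes to stderr, so it is redirected before piping:

$ java_major_version "$(java -version 2>&1 | java_version_from_output)"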

Confirm that the build succeeds.

$ export MAVEN_OPTS="-Xss64m -Xmx2g -XX:ReservedCodeCacheSize=1g"

$ ./build/mvn -DskipTests clean package
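A successful build places the jars that bin/spark-shell loads under assembly/target/scala-2.12/jars. This path assumes the default Scala 2.12 build. The hypothetical function below is one way to check for them before launching anything.

```shell
# Hypothetical helper: succeed if a Spark source tree (default: current
# directory) contains the assembled spark-core jar. The scala-2.12 path
# is an assumption based on the default Scala version.
spark_build_ok() {
  local jars_dir="${1:-.}/assembly/target/scala-2.12/jars"
  # ls fails when the glob matches nothing, i.e. spark-core was not assembled
  ls "$jars_dir"/spark-core_*.jar >/dev/null 2>&1
}
```

$ spark_build_ok && ./bin/spark-submit --version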

Build in IntelliJ

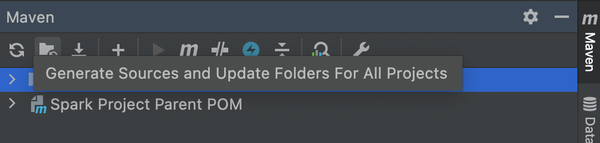

Open the source as a Maven project from “New > Project from Existing Sources.” The JDK is installed under ~/.asdf/installs/java/, which is a hidden directory, so press “Command + Shift + .” in the file chooser to show hidden directories and select it. Then run “Generate Sources and Update Folders For All Projects” from the Maven tool window, after which Build Project succeeds.
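The JDK path can also be found in a terminal instead of browsing hidden directories. `asdf where java` prints the install path of the current version. The hypothetical function below lists every installed Java version. It assumes asdf's default layout of `$ASDF_DATA_DIR/installs/java/<version>`, where ASDF_DATA_DIR defaults to ~/.asdf.

```shell
# Hypothetical helper: print each Java install directory managed by asdf.
list_asdf_jdks() {
  local root="${ASDF_DATA_DIR:-$HOME/.asdf}/installs/java"
  local d
  for d in "$root"/*/; do
    # skip the literal pattern left behind when nothing is installed
    [ -d "$d" ] && echo "${d%/}"
  done
}
```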

Remote debug

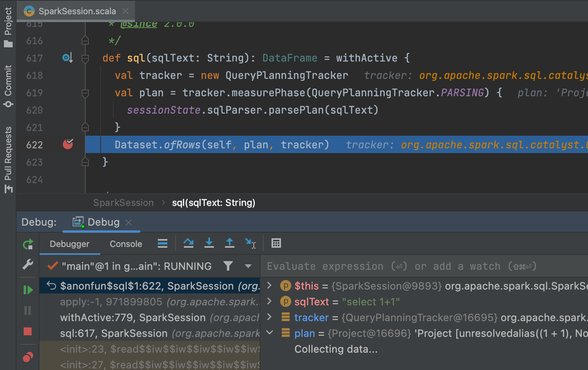

Start a debug session with a Listen to remote JVM configuration, then pass the following option as spark.driver.extraJavaOptions. Breakpoints are then hit.

Debug a Java application running on a remote machine by enabling JDWP - sambaiz-net

$ ./bin/spark-shell --conf "spark.driver.extraJavaOptions=-agentlib:jdwp=transport=dt_socket,server=n,suspend=n,address=localhost:5005"

scala> spark.sql("select 1+1").collect()
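In these options, server=n makes the Spark driver JVM connect out to a debugger that is already listening (the Listen to remote JVM configuration). suspend=n lets the JVM keep running instead of pausing at startup. Because the JVM connects at startup, the listener presumably has to be running before spark-shell is launched. The hypothetical helper below builds the same option for a different host or port.

```shell
# Hypothetical helper: build the JDWP agent option used above.
# Arguments: port (default 5005), host (default localhost).
jdwp_opts() {
  local port="${1:-5005}" host="${2:-localhost}"
  echo "-agentlib:jdwp=transport=dt_socket,server=n,suspend=n,address=${host}:${port}"
}
```

$ ./bin/spark-shell --conf "spark.driver.extraJavaOptions=$(jdwp_opts 5005)"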

With sbt, the same option can be passed to the test JVM as follows.

$ ./build/sbt

sbt:spark-parent> project core

sbt:spark-core> set javaOptions in Test += "-agentlib:jdwp=transport=dt_socket,server=n,suspend=n,address=localhost:5005"

sbt:spark-core> testOnly *SparkContextSuite -- -t "Only one SparkContext may be active at a time"
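The interactive session can also be run in a single invocation, because sbt executes each command-line argument as a command, in order. This is a sketch with a hypothetical helper that prints the `set` command for a given port.

```shell
# Hypothetical helper: print the sbt "set" command that adds the JDWP
# agent option to the test JVM's javaOptions (port defaults to 5005).
sbt_debug_set() {
  local port="${1:-5005}"
  printf 'set javaOptions in Test += "-agentlib:jdwp=transport=dt_socket,server=n,suspend=n,address=localhost:%s"\n' "$port"
}
```

$ ./build/sbt "project core" "$(sbt_debug_set 5005)" 'testOnly *SparkContextSuite -- -t "Only one SparkContext may be active at a time"'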