Deploy Japanese LLMs in TGI Container with SageMaker's HuggingFaceModel and generate texts

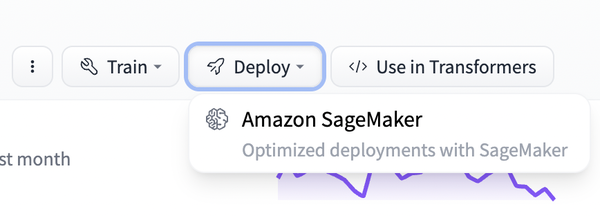

Several Japanese LLMs have recently been released on Hugging Face, such as OpenCALM-7B by CyberAgent and ELYZA-japanese-Llama-2-7b by ELYZA, a spin-out from Matsuo Lab at the University of Tokyo. The SageMaker SDK has a HuggingFaceModel class, which deploys a model when you specify its model ID. If you press the Deploy button on a model's Hugging Face page, you can also see the minimal code needed to run that model on SageMaker.

get_huggingface_llm_image_uri() returns the image URI of the Hugging Face Text Generation Inference (TGI) container, which is a DLC (Deep Learning Container) for TGI. TGI is open-source software by Hugging Face that generates text quickly by processing in parallel on multiple GPUs. ml.g5.12xlarge has 4 GPUs, so I set SM_NUM_GPUS to 4; this variable is read by sagemaker-entrypoint.sh in the TGI repository.
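As a rough sketch of how SM_NUM_GPUS could be derived from the instance type instead of being hard-coded, the snippet below looks up the GPU count in a small table. The names `G5_GPU_COUNTS` and `build_hub_env` are hypothetical and are not part of the SageMaker SDK, and the table only covers a few g5 instance types, so check the instance specifications before relying on it.

```python
# Hypothetical helper (not part of the SageMaker SDK): derive SM_NUM_GPUS
# from the instance type so the two settings cannot drift apart.
G5_GPU_COUNTS = {
    "ml.g5.xlarge": 1,
    "ml.g5.2xlarge": 1,
    "ml.g5.12xlarge": 4,
    "ml.g5.48xlarge": 8,
}


def build_hub_env(model_id: str, instance_type: str) -> dict:
    """Return the container environment for a model and an instance type."""
    if instance_type not in G5_GPU_COUNTS:
        raise ValueError(f"Unknown GPU count for {instance_type}")
    # Environment variable values must be strings.
    return {
        "HF_MODEL_ID": model_id,
        "SM_NUM_GPUS": str(G5_GPU_COUNTS[instance_type]),
    }


print(build_hub_env("cyberagent/open-calm-7b", "ml.g5.12xlarge"))
```

The resulting dictionary has the same shape as the hub dictionary passed to HuggingFaceModel via env, minus the pinned revision.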

If the sagemaker library is outdated, the deployment can fail, so I updated it.

# !pip install sagemaker==2.182.0

import sagemaker
from sagemaker.huggingface import HuggingFaceModel, get_huggingface_llm_image_uri

hub = {
    'HF_MODEL_ID': 'cyberagent/open-calm-7b', # or elyza/ELYZA-japanese-Llama-2-7b
    'HF_MODEL_REVISION': '276a5fb67510554e11ef191a2da44c919acccdf5', # or 976887c5891284db204320860bb84b71d598063e
    'SM_NUM_GPUS': '4'
}

image_uri = get_huggingface_llm_image_uri("huggingface", version="0.9.3")
print(f"image_uri: {image_uri}") # 763104351884.dkr.ecr.ap-northeast-1.amazonaws.com/huggingface-pytorch-tgi-inference:2.0.1-tgi0.9.3-gpu-py39-cu118-ubuntu20.04

huggingface_model = HuggingFaceModel(
    image_uri=image_uri,
    env=hub,
    role=sagemaker.get_execution_role(), # available if run on SageMaker Studio
)

predictor = huggingface_model.deploy(
    initial_instance_count=1,
    instance_type="ml.g5.12xlarge",
    container_startup_health_check_timeout=300,
)

The parameters that can be passed to predict() are listed in TGI's API docs and in its code. I passed the following inputs, which include few-shot examples. At first the model output not only the answer but also the next question, so I specified the stop parameter.

%time

outputs = predictor.predict({
    "inputs": "Q: 世界で1番目に高い山は? A: エベレスト\nQ: 日本で1番目に高い山は? A: 富士山\nQ: 日本で2番目に高い山は? A: ",
    "parameters": {
        "stop": ["\n"],
        "details": True,
    },
})

print(outputs)
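The few-shot prompt above can also be assembled from a list of question and answer pairs, which makes it easier to swap examples in and out. This is only a minimal sketch: `build_few_shot_payload` is a hypothetical helper introduced here, and it assumes the same "Q: ... A: ..." layout and the same stop and details parameters as the request above.

```python
def build_few_shot_payload(examples, question, stop=("\n",)):
    """Build a TGI request body from (question, answer) pairs plus a new question."""
    shots = [f"Q: {q} A: {a}" for q, a in examples]
    # The last line ends with "A: " so that the model continues with the answer.
    prompt = "\n".join(shots + [f"Q: {question} A: "])
    return {
        "inputs": prompt,
        "parameters": {"stop": list(stop), "details": True},
    }


payload = build_few_shot_payload(
    [("世界で1番目に高い山は?", "エベレスト"), ("日本で1番目に高い山は?", "富士山")],
    "日本で2番目に高い山は?",
)
print(payload["inputs"])
```

The returned dictionary can be passed to predictor.predict() in place of the literal request.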

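When I ran this, %time reported 5-6 µs for both models. Note that %time on a line by itself times an empty statement rather than the predict() call, so this figure is not the inference latency; putting %%time on the first line of the cell would time the whole request. Btw, the correct answer is 北岳.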

// open-calm-7b

[
    {
        'generated_text': 'Q: 世界で1番目に高い山は? A: エベレスト\nQ: 日本で1番目に高い山は? A: 富士山\nQ: 日本で2番目に高い山は? A: 槍ヶ岳\n',
        'details': {
            'finish_reason': 'stop_sequence',
            'generated_tokens': 3,
            'seed': None,
            'prefill': [],
            'tokens': [
                {'id': 19597, 'text': '槍', 'logprob': -0.59814453, 'special': False},
                {'id': 25157, 'text': 'ヶ岳', 'logprob': -0.11425781, 'special': False},
                {'id': 186, 'text': '\n', 'logprob': -0.19836426, 'special': False}
            ]
        }
    }
]

// ELYZA-japanese-Llama-2-7b

[
    {
        'generated_text': 'Q: 世界で1番目に高い山は? A: エベレスト\nQ: 日本で1番目に高い山は? A: 富士山\nQ: 日本で2番目に高い山は? A: 北岳\n',
        'details': {
            'finish_reason': 'stop_sequence',
            'generated_tokens': 5,
            'seed': None,
            'prefill': [],
            'tokens': [
                {'id': 30662, 'text': '北', 'logprob': -1.6376953, 'special': False},
                {'id': 232, 'text': '', 'logprob': -0.60302734, 'special': False},
                {'id': 181, 'text': '', 'logprob': -0.011375427, 'special': False},
                {'id': 182, 'text': '岳', 'logprob': -0.0010786057, 'special': False},
                {'id': 13, 'text': '\n', 'logprob': -0.40161133, 'special': False}
            ]
        }
    }
]
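To work with responses like the ones above, it can help to strip the echoed prompt and to turn the per-token logprob values into a single probability for the whole answer. The sketch below does both on a trimmed copy of the open-calm-7b response. The helper names are hypothetical, and it assumes that the logprob values are natural logarithms.

```python
import math


def extract_answer(prompt: str, response: list) -> str:
    """Remove the echoed prompt and surrounding whitespace from generated_text."""
    text = response[0]["generated_text"]
    if text.startswith(prompt):
        text = text[len(prompt):]
    return text.strip()


def sequence_probability(response: list) -> float:
    """Multiply the token probabilities by summing their log-probabilities.

    Assumes the logprob values are natural logarithms.
    """
    tokens = response[0]["details"]["tokens"]
    return math.exp(sum(t["logprob"] for t in tokens))


prompt = "Q: 世界で1番目に高い山は? A: エベレスト\nQ: 日本で1番目に高い山は? A: 富士山\nQ: 日本で2番目に高い山は? A: "

# Trimmed copy of the open-calm-7b response shown above.
response = [{
    "generated_text": prompt + "槍ヶ岳\n",
    "details": {
        "tokens": [
            {"id": 19597, "text": "槍", "logprob": -0.59814453, "special": False},
            {"id": 25157, "text": "ヶ岳", "logprob": -0.11425781, "special": False},
            {"id": 186, "text": "\n", "logprob": -0.19836426, "special": False},
        ],
    },
}]

print(extract_answer(prompt, response))
print(sequence_probability(response))
```

Under that assumption, the second value is the model's probability for the full generated continuation, including the newline that triggered the stop sequence.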

temperature divides the logits before they are passed to the softmax function, so the probability of token i becomes exp(x_i / T) / sum_j exp(x_j / T). If T is less than 1, the differences between token probabilities widen; if it is greater than 1, they narrow. This can be used to adjust the diversity of the generated text.

# open-calm-7b

print(predictor.predict({
    "inputs": "今日はとっても楽しかったね。明日は",
    "parameters": {
        "temperature": 0.1,
    }
})) # [{'generated_text': '今日はとっても楽しかったね。明日は、お別れ遠足。お天気が心配だけど、元気に幼稚園に来てね。'}]

print(predictor.predict({
    "inputs": "今日はとっても楽しかったね。明日は",
    "parameters": {
        "temperature": 5,
    }
})) # [{'generated_text': '今日はとっても楽しかったね。明日はフレッシュ東京五輪to暖かく酸素軍第たどり着椅濃厚と言え年度が成立料金MMako始まってませたあなたも共催その間に'}]

// ELYZA-japanese-Llama-2-7b

[{'generated_text': '今日はとっても楽しかったね。明日はお休みだから、ゆっくり休んでね。'}]

[{'generated_text': '今日はとっても楽しかったね。明日はoccup episodes and Köm x Linux social Јplaightkehr Национальistent chang rect ils spot Point計'}]
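The effect of temperature seen above can be reproduced on a toy set of logits. This is a minimal sketch in plain Python rather than TGI's actual implementation: `softmax_with_temperature` is a hypothetical function, and the logits are made-up values, not taken from either model.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Divide the logits by the temperature, then apply softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]  # toy values for three candidate tokens
for t in (0.1, 1.0, 5.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

With a temperature of 0.1 almost all of the probability goes to the top token, which matches the stable, predictable completions above. With a temperature of 5 the three probabilities move close to each other, so unlikely tokens are sampled far more often.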

Clean up.

predictor.delete_model()

predictor.delete_endpoint()