When I ran a large application on an EKS cluster, the subnet’s IP addresses ran out.

Reason for IP address exhaustion

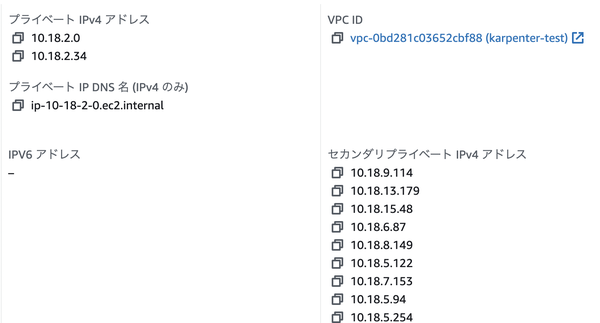

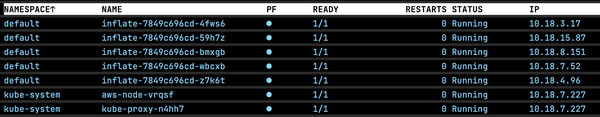

Pod IP addresses are assigned by ipamd (IP Address Management Daemon), part of the VPC CNI plugin, and are taken from the secondary IP addresses of the ENIs attached to the instance.

The number of secondary IP addresses is controlled in the VPC CNI settings by WARM_ENI_TARGET (default: 1), the number of spare ENIs, or WARM_IP_TARGET (default: none), the number of spare IP addresses. By default, when the first Pod runs, it takes one address from the first ENI, so that ENI is no longer entirely free; a second ENI is then attached and filled with as many IP addresses as an ENI can hold. The maximum number of ENIs per instance and the maximum number of IP addresses per ENI depend on the instance type.
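To make the default behaviour concrete, here is a rough sketch of how many Pod addresses a node ends up holding when only WARM_ENI_TARGET is set. The names `heldPodIps` and `InstanceLimits` and the example limits (3 ENIs, 10 addresses per ENI) are made up for illustration, and the model ignores timing, prefix delegation, and the other warm-pool settings.

```typescript
// Simplified model of the default warm pool (WARM_ENI_TARGET only).
// Not the actual ipamd logic; an approximation for estimating address usage.
interface InstanceLimits {
  maxEnis: number;    // maximum ENIs attachable to the instance type
  ipv4PerEni: number; // maximum IPv4 addresses per ENI, including the primary one
}

function heldPodIps(pods: number, limits: InstanceLimits, warmEniTarget = 1): number {
  // The primary address of each ENI is not handed out to Pods.
  const podIpsPerEni = limits.ipv4PerEni - 1;
  // ENIs needed for the running Pods, plus the spare ENIs kept warm.
  const wantedEnis = Math.ceil(pods / podIpsPerEni) + warmEniTarget;
  // At least the primary ENI is always attached; the instance type caps the total.
  const attachedEnis = Math.min(limits.maxEnis, Math.max(1, wantedEnis));
  return attachedEnis * podIpsPerEni;
}

// Hypothetical limits for illustration; look up the real values for your instance type.
const exampleLimits: InstanceLimits = { maxEnis: 3, ipv4PerEni: 10 };

const idle = heldPodIps(0, exampleLimits);     // 1 ENI  -> 9 Pod addresses
const onePod = heldPodIps(1, exampleLimits);   // 2 ENIs -> 18 Pod addresses
const tenPods = heldPodIps(10, exampleLimits); // 3 ENIs -> 27 Pod addresses
```

Under these assumptions a single Pod already reserves 18 Pod addresses, plus one primary address per ENI in the subnet.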

As a result, a node holds more IP addresses than it needs, especially when only a few Pods run on a large instance type. In that case, you can set WARM_IP_TARGET so that only the minimum number of spare IP addresses is kept. On a large cluster, however, the EC2 API calls may be throttled because there are so many of them, so it is recommended to set MINIMUM_IP_TARGET as well.

    $ kubectl set env ds aws-node -n kube-system WARM_IP_TARGET=2

It can also be changed with CDK.

    new eks.KubernetesPatch(this, 'LimitWarmIPAddr', {
      cluster,
      resourceName: 'daemonset/aws-node',
      resourceNamespace: 'kube-system',
      applyPatch: {
        spec: {
          template: {
            spec: {
              containers: [{
                name: 'aws-node',
                env: [{
                  name: 'WARM_IP_TARGET',
                  value: '2',
                }]
              }],
            },
          },
        },
      },
      restorePatch: {},
    })

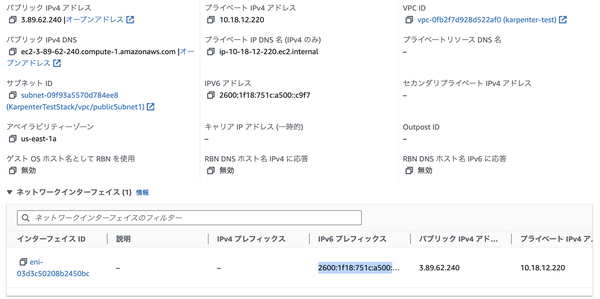

In this example, 5 Pods are running on a node with 7 IP addresses.
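The figure of 7 is consistent with 5 addresses in use plus the 2 spare ones requested by WARM_IP_TARGET=2. A minimal sketch of that arithmetic follows; the function name `targetHeldIps` and its parameters are hypothetical, and treating MINIMUM_IP_TARGET as a simple floor is a simplification of what ipamd does.

```typescript
// Simplified model: the number of addresses ipamd aims to keep on a node
// when WARM_IP_TARGET (and optionally MINIMUM_IP_TARGET) is set.
function targetHeldIps(
  pods: number,
  warmIpTarget: number,
  minimumIpTarget = 0,
  capacity = Number.POSITIVE_INFINITY, // upper bound imposed by the instance type
): number {
  // Keep warmIpTarget spare addresses, but never fewer than minimumIpTarget in total.
  const wanted = Math.max(pods + warmIpTarget, minimumIpTarget);
  return Math.min(wanted, capacity);
}

const held = targetHeldIps(5, 2);               // 5 in use + 2 spare = 7
const heldWithFloor = targetHeldIps(5, 2, 10);  // the floor of 10 applies
```

With a floor such as MINIMUM_IP_TARGET=10, the node would start with 10 addresses and only request more once Pods plus spares exceed that.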

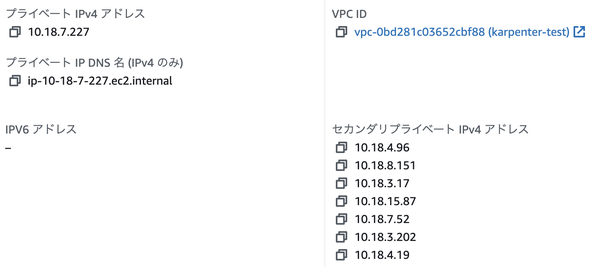

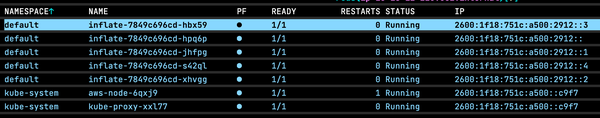

Migration to IPv6

However, when a large number of Pods are running, the only fundamental fix for IP address exhaustion is to expand the CIDR blocks of the subnets. In such a case, you can instead assign IPv6 addresses to Pods by setting the cluster's ipFamily to IPv6. This setting can only be chosen when the cluster is created. In addition, IPv6 CIDR blocks must be assigned to the subnets, and older instance types that are not Nitro-based are not supported.

    new eks.Cluster(this, 'cluster', {
      ...
      ipFamily: eks.IpFamily.IP_V6
    })

Pod IPv6 addresses are assigned from an IPv6 prefix. If no IPv6 prefix is set, or the node doesn’t have the ec2:AssignIpv6Addresses permission, you will see a “failed to assign an IP address to container” error. Incidentally, if you set ENABLE_PREFIX_DELEGATION=true, IPv4 addresses are also assigned from prefixes, so you can run more Pods on one node.

    nodeRole.attachInlinePolicy(new iam.Policy(scope, 'IPV6Policy', {
      statements: [
        new iam.PolicyStatement({
          actions: ["ec2:AssignIpv6Addresses", "ec2:UnassignIpv6Addresses"],
          resources: ["arn:aws:ec2:*:*:network-interface/*"],
        }),
      ],
    }))
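As an aside on the ENABLE_PREFIX_DELEGATION remark above, the gain comes from each address slot on an ENI holding a /28 IPv4 prefix (16 addresses) instead of a single address. The sketch below estimates the resulting address capacity; `addressesInPrefix`, `podIpCapacity`, and the example limits (3 ENIs, 10 slots per ENI) are hypothetical names and values chosen for illustration.

```typescript
// Prefix delegation in VPC CNI assigns /28 IPv4 prefixes to ENI slots.
const IPV4_PREFIX_LENGTH = 28;

// Number of addresses contained in a prefix of the given length.
function addressesInPrefix(prefixLength: number, addressBits = 32): number {
  return 2 ** (addressBits - prefixLength);
}

// Rough upper bound on Pod addresses for a node (simplified model).
function podIpCapacity(maxEnis: number, ipv4PerEni: number, prefixDelegation: boolean): number {
  // One slot per ENI is taken by the ENI's primary address.
  const slots = maxEnis * (ipv4PerEni - 1);
  return prefixDelegation ? slots * addressesInPrefix(IPV4_PREFIX_LENGTH) : slots;
}

const withoutPrefix = podIpCapacity(3, 10, false); // 27 individual addresses
const withPrefix = podIpCapacity(3, 10, true);     // 27 prefixes of 16 addresses = 432
```

This is only an address-capacity estimate; the number of Pods that can actually be scheduled is also limited by the node's max-pods setting.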

Pods communicate over IPv6. To reach external IPv4 endpoints, each Pod is also assigned a host-local IPv4 address, which is NATed to the IPv4 address of the host's ENI.

If the capacity is not a managed node group, you need to pass the IP family and the service IPv6 CIDR as bootstrap.sh parameters; otherwise Pods aren’t assigned IPv6 addresses.

Call AWS API with AwsCustomResource in CDK - sambaiz-net

    const clusterInfo = new custom_resources.AwsCustomResource(this, 'EksDescribeCluster', {
      onUpdate: {
        service: 'eks',
        action: 'describeCluster',
        parameters: {
          name: cluster.clusterName,
        },
        physicalResourceId: custom_resources.PhysicalResourceId.fromResponse("cluster.arn")
      },
      policy: custom_resources.AwsCustomResourcePolicy.fromSdkCalls({
        resources: [cluster.clusterArn]
      }),
    })

    cluster.addAutoScalingGroupCapacity('EKSClusterDefaultCapacity', {
      ...
      bootstrapOptions: {
        additionalArgs: `--ip-family ipv6 --service-ipv6-cidr ${clusterInfo.getResponseField("cluster.kubernetesNetworkConfig.serviceIpv6Cidr")}`,
      },
    })