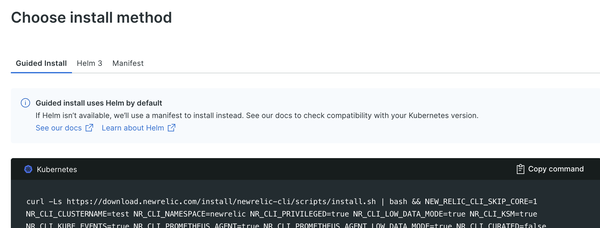

The New Relic Kubernetes integration consists of several components, and the nri-bundle Helm chart bundles them together. The guided install generates the parameters to pass, so I copied them into CDK.

According to the chart, credentials can be passed either as plain strings or through Secrets, so I imported them from Secrets Manager with External Secrets.

```typescript
const newrelicNamespace = cluster.addManifest('newrelic-namespace', {
  apiVersion: 'v1',
  kind: 'Namespace',
  metadata: {
    name: 'newrelic'
  }
})

const secretName = 'newrelic-bundle-secret'

const newrelicBundleSecret = cluster.addManifest('NewrelicBundleSecret', {
  apiVersion: 'external-secrets.io/v1beta1',
  kind: 'ExternalSecret',
  metadata: {
    name: secretName,
    namespace: 'newrelic'
  },
  spec: {
    refreshInterval: '1h',
    secretStoreRef: {
      name: 'secretsmanager',
      kind: 'ClusterSecretStore'
    },
    data: [{
      secretKey: 'newrelic-license-key',
      remoteRef: {
        key: secretName,
        property: 'newrelic-license-key'
      }
    }, {
      secretKey: 'pixie-api-key',
      remoteRef: {
        key: secretName,
        property: 'pixie-api-key'
      }
    }, {
      // key of the deploy key is "deploy-key".
      // https://github.com/pixie-io/pixie/blob/release/vizier/v0.14.8/k8s/operator/helm/values.yaml#L37
      secretKey: 'deploy-key',
      remoteRef: {
        key: secretName,
        property: 'pixie-deploy-key'
      }
    }]
  }
})
newrelicBundleSecret.node.addDependency(newrelicNamespace)
newrelicBundleSecret.node.addDependency(secretStore)
```
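The `secretStore` referenced above is not shown in this post. As a rough sketch only, a ClusterSecretStore for AWS Secrets Manager might look like the following, assuming External Secrets Operator is already installed and authenticates through a service account that has IAM access to the secret. The helper name, region, service account name, and namespace are hypothetical placeholders, not values from this setup.

```typescript
// Hypothetical helper that builds a ClusterSecretStore manifest for AWS Secrets Manager.
// region / serviceAccountName / serviceAccountNamespace are assumptions for illustration.
interface SecretStoreOptions {
  region: string
  serviceAccountName: string
  serviceAccountNamespace: string
}

function buildClusterSecretStore(name: string, opts: SecretStoreOptions) {
  return {
    apiVersion: 'external-secrets.io/v1beta1',
    kind: 'ClusterSecretStore',
    metadata: { name },
    spec: {
      provider: {
        aws: {
          service: 'SecretsManager',
          region: opts.region,
          auth: {
            jwt: {
              // Service account whose IAM role can read the secret (assumed names).
              serviceAccountRef: {
                name: opts.serviceAccountName,
                namespace: opts.serviceAccountNamespace,
              },
            },
          },
        },
      },
    },
  }
}

// The name must match secretStoreRef.name in the ExternalSecret.
const secretStoreManifest = buildClusterSecretStore('secretsmanager', {
  region: 'us-east-1',
  serviceAccountName: 'external-secrets',
  serviceAccountNamespace: 'external-secrets',
})
```

In a stack, the resulting object could be passed to `cluster.addManifest` in the same way as the other manifests. Given the `remoteRef` entries above, the Secrets Manager secret named `newrelic-bundle-secret` is expected to be a JSON document with the properties `newrelic-license-key`, `pixie-api-key`, and `pixie-deploy-key`.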

Currently, the bundled Pixie version is old, and it causes an error when running on ARM instances. A PR has already been opened.

```typescript
const newrelicBundle = cluster.addHelmChart('newrelic-bundle', {
  chart: 'nri-bundle',
  release: 'newrelic-bundle',
  repository: 'https://helm-charts.newrelic.com',
  namespace: 'newrelic',
  createNamespace: false,
  values: {
    global: {
      customSecretName: secretName,
      customSecretLicenseKey: 'newrelic-license-key',
      cluster: cluster.clusterName,
      lowDataMode: true,
    },
    "newrelic-infrastructure": {
      privileged: true,
    },
    "kube-state-metrics": {
      enabled: true,
      image: {
        tag: "v2.10.0"
      }
    },
    "kubeEvents": {
      enabled: true,
    },
    "newrelic-prometheus-agent": {
      enabled: true,
      lowDataMode: true,
      config: {
        kubernetes: {
          integrations_filter: {
            enabled: true,
          }
        }
      },
    },
    "newrelic-logging": {
      enabled: true,
      lowDataMode: true,
      fluentBit: {
        // excluding pods by adding the annotation fluentbit.io/exclude: "true"
        k8sLoggingExclude: true
      }
    },
    "newrelic-pixie": {
      enabled: true,
      customSecretApiKeyName: secretName,
      customSecretApiKeyKey: "pixie-api-key"
    },
    "pixie-chart": {
      enabled: true,
      customDeployKeySecret: secretName,
      clusterName: cluster.clusterName,
    }
  },
  wait: false
})
newrelicBundle.node.addDependency(newrelicBundleSecret)
```

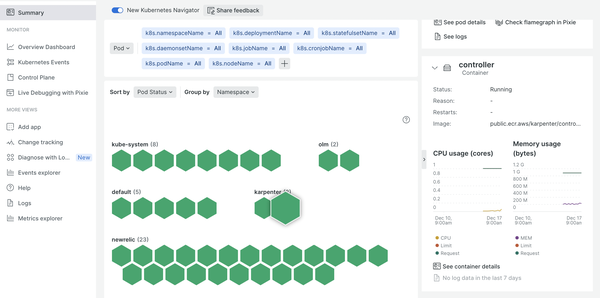

Once it is installed, you can see metrics and logs at the container and node level, as well as events.

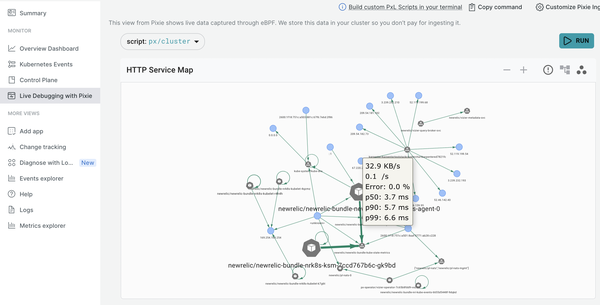

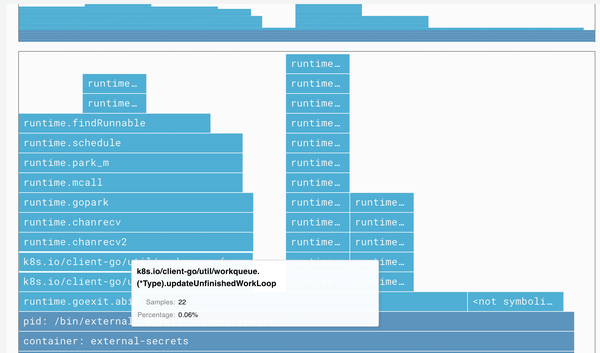

Pixie collects data with eBPF programs, which run in a sandbox in the Linux kernel and are triggered by events such as network activity, and it visualizes the service map, traffic, and the application's flame graph.

Get communication in Kubernetes cluster with Pixie’s PxL script - sambaiz-net

newrelic-prometheus-agent is a component that runs Prometheus in Agent mode and sends the Exporters' metrics to New Relic via remote_write. Queries, alerts, and recording rules are unavailable in this mode.

```
$ kubectl port-forward -n newrelic $(kubectl get pod -n newrelic -l app.kubernetes.io/name=newrelic-prometheus-agent -o jsonpath="{.items[0].metadata.name}") 9090:9090
Forwarding from 127.0.0.1:9090 -> 9090
Forwarding from [::1]:9090 -> 9090

$ curl -G 'http://localhost:9090/api/v1/query' --data-urlencode 'query=up' | jq
{
  "status": "error",
  "errorType": "execution",
  "error": "unavailable with Prometheus Agent"
}
```

Service discovery for Exporters is performed by calling the Kubernetes API to get the resources corresponding to kubernetes_sd_configs in scrape_configs. Each rule in relabel_configs joins the values of source_labels with separator and compares the resulting string with regex. If action is keep, targets that do not match are dropped; if action is drop, targets that match are dropped; and if action is replace, target_label is set to the replacement value. In other words, if you add the newrelic.io/scrape: "true" annotation to a Pod, the Prometheus metrics returned by /metrics will be sent.

```yaml
# /etc/prometheus/config/config.yaml
...
scrape_configs:
...
- job_name: newrelic-pod
  honor_timestamps: true
  scrape_interval: 30s
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: http
  follow_redirects: true
  enable_http2: true
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_annotation_newrelic_io_scrape]
    separator: ;
    regex: "true"
    replacement: $1
    action: keep
  - source_labels: [__meta_kubernetes_pod_phase]
    separator: ;
    regex: Pending|Succeeded|Failed|Completed
    replacement: $1
    action: drop
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
    separator: ;
    regex: (https?)
    target_label: __scheme__
    replacement: $1
    action: replace
  ...
  kubernetes_sd_configs:
  - role: pod
    kubeconfig_file: ""
    follow_redirects: true
    enable_http2: true
...
remote_write:
- url: https://metric-api.newrelic.com/prometheus/v1/write?collector_name=prometheus-agent&collector_version=1.11.0&prometheus_server=newrelic-bundle-newrelic-prometheus-agent-0
  remote_timeout: 30s
  write_relabel_configs:
  - source_labels: [__name__]
    separator: ;
    regex: kube_.+|container_.+|machine_.+|cadvisor_.+
    replacement: $1
    action: drop
  - source_labels: [__name__]
    separator: ;
    regex: timeseries_write_(.*)
    target_label: newrelic_metric_type
    replacement: counter
    action: replace
  - source_labels: [__name__]
    separator: ;
    regex: sql_byte(.*)
    target_label: newrelic_metric_type
    replacement: counter
    action: replace
  name: newrelic_rw
  authorization:
    type: Bearer
    credentials: <secret>
...
```
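To make the keep / drop / replace behavior in the newrelic-pod job more concrete, here is a simplified model of relabeling in TypeScript. It is only a sketch and not how Prometheus is implemented: `applyRelabel` and `RelabelRule` are hypothetical names, JavaScript regular expressions stand in for RE2 (equivalent for these simple patterns), and details such as removing a label when the replacement is empty are left out. The `newrelic.io/scrape` annotation shows up as `__meta_kubernetes_pod_annotation_newrelic_io_scrape` because characters that are not valid in label names are replaced with underscores.

```typescript
// Simplified model of Prometheus relabeling for the keep / drop / replace actions.
type Labels = Record<string, string>

interface RelabelRule {
  sourceLabels: string[]
  separator?: string   // defaults to ";"
  regex?: string       // defaults to "(.*)"
  targetLabel?: string
  replacement?: string // defaults to "$1"
  action: 'keep' | 'drop' | 'replace'
}

// Returns the relabeled label set, or null when the target is dropped.
function applyRelabel(labels: Labels, rules: RelabelRule[]): Labels | null {
  let current: Labels = { ...labels }
  for (const rule of rules) {
    // Missing labels are treated as empty strings.
    const value = rule.sourceLabels.map((l) => current[l] ?? '').join(rule.separator ?? ';')
    // The regex must match the whole joined string, so anchor it on both ends.
    const match = new RegExp(`^(?:${rule.regex ?? '(.*)'})$`).exec(value)
    if (rule.action === 'keep' && !match) return null
    if (rule.action === 'drop' && match) return null
    if (rule.action === 'replace' && match && rule.targetLabel) {
      // Expand $1, $2, ... in the replacement with the captured groups.
      const expanded = (rule.replacement ?? '$1').replace(/\$(\d+)/g, (_, i) => match[Number(i)] ?? '')
      current = { ...current, [rule.targetLabel]: expanded }
    }
  }
  return current
}

// The three rules of the newrelic-pod job shown above.
const rules: RelabelRule[] = [
  { sourceLabels: ['__meta_kubernetes_pod_annotation_newrelic_io_scrape'], regex: 'true', action: 'keep' },
  { sourceLabels: ['__meta_kubernetes_pod_phase'], regex: 'Pending|Succeeded|Failed|Completed', action: 'drop' },
  { sourceLabels: ['__meta_kubernetes_pod_annotation_prometheus_io_scheme'], regex: '(https?)', targetLabel: '__scheme__', action: 'replace' },
]

// Running Pod with the annotation: kept, and __scheme__ is set from the scheme annotation.
const annotated = applyRelabel({
  __meta_kubernetes_pod_annotation_newrelic_io_scrape: 'true',
  __meta_kubernetes_pod_phase: 'Running',
  __meta_kubernetes_pod_annotation_prometheus_io_scheme: 'https',
}, rules)

// Pod without the annotation: dropped by the keep rule.
const unannotated = applyRelabel({ __meta_kubernetes_pod_phase: 'Running' }, rules)

// Annotated but still Pending: dropped by the drop rule.
const pending = applyRelabel({
  __meta_kubernetes_pod_annotation_newrelic_io_scrape: 'true',
  __meta_kubernetes_pod_phase: 'Pending',
}, rules)
```

Here `annotated` keeps its labels and gains `__scheme__: 'https'`, while `unannotated` and `pending` are both `null`, that is, not scraped.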

The sent metrics can be referenced as fields of the same name in FROM Metric. However, a Prometheus Counter returns a cumulative sum, whereas a New Relic Count is a delta value, so Counters are converted.

```
/* container_memory_usage_bytes{id="/"} */
FROM Metric SELECT latest(container_memory_usage_bytes) WHERE id = '/' TIMESERIES
```
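The cumulative-to-delta conversion mentioned above can be illustrated with a small sketch. This is not New Relic's actual implementation; `cumulativeToDelta` is a hypothetical function, and treating a decrease as a counter reset is an assumption made for the example.

```typescript
interface Sample {
  timestamp: number // seconds
  value: number
}

// Converts cumulative counter samples into per-interval deltas.
// A decrease is treated as a counter reset (assumption), so the new value itself becomes the delta.
function cumulativeToDelta(samples: Sample[]): Sample[] {
  const deltas: Sample[] = []
  for (let i = 1; i < samples.length; i++) {
    const prev = samples[i - 1].value
    const cur = samples[i].value
    deltas.push({
      timestamp: samples[i].timestamp,
      value: cur >= prev ? cur - prev : cur,
    })
  }
  return deltas
}

const converted = cumulativeToDelta([
  { timestamp: 0, value: 100 },
  { timestamp: 30, value: 130 },
  { timestamp: 60, value: 175 },
  { timestamp: 90, value: 10 }, // the counter was reset, e.g. by a restart
])
// converted values: 30, 45, 10
```

The first sample only serves as a baseline, so four cumulative samples yield three deltas.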