Install Livy on EMR on EKS and run Spark jobs from local Jupyter notebooks with Sparkmagic

Apache Livy provides a REST API for interacting with Spark clusters, and Sparkmagic calls this API to run jobs on remote Spark clusters from Jupyter Notebooks. It is also used by Athena for Apache Spark. Being able to run jobs interactively and check the results is useful for debugging and for ad-hoc queries.

Preventing garbled Japanese text when calling DataFrame.toPandas().plot() in an Athena for Apache Spark notebook - sambaiz-net

However, although a PR adding Kubernetes support to Livy was opened in 2019, there is no maintainer who knows Kubernetes well, and it remains unmerged. I considered building it myself, but it turns out that EMR 7.1 supports Livy. In this post I use it to run Spark jobs on EMR on EKS from a local Jupyter Notebook.

Install Livy with the Helm chart, passing the required parameters as values.

    import * as cdk from 'aws-cdk-lib'
    import * as iam from 'aws-cdk-lib/aws-iam'

    // Runs inside a cdk.Stack constructor; `cluster` is assumed to be an existing eks.Cluster.

    // IAM role that the Livy service account assumes through the cluster's OIDC provider.
    const livyExecutionRole = new iam.Role(this, 'LivyExecutionRole', {
      roleName: 'livy-execution-role',
      assumedBy: new iam.WebIdentityPrincipal(
        cluster.openIdConnectProvider.openIdConnectProviderArn,
        {
          // The issuer is a deploy-time token, so the condition key is wrapped in CfnJson.
          StringLike: new cdk.CfnJson(this, 'LivyExecutionRoleStringEquals', {
            value: {
              [`${cluster.clusterOpenIdConnectIssuer}:sub`]: 'system:serviceaccount:livy:emr-containers-sa-livy',
            },
          }),
        }
      ),
      inlinePolicies: {
        // Allow Livy to upload files to the S3 bucket.
        UploadS3: new iam.PolicyDocument({
          statements: [
            new iam.PolicyStatement({
              effect: iam.Effect.ALLOW,
              actions: [
                's3:PutObject',
                's3:ListBucket',
              ],
              resources: [
                'arn:aws:s3:::<bucket>',
                'arn:aws:s3:::<bucket>/*'
              ],
            }),
          ],
        }),
      },
    })

    // Install the Livy chart into the `livy` namespace; Spark pods run in `emr`.
    cluster.addHelmChart('LivyChart', {
      chart: 'livy',
      repository: 'oci://059004520145.dkr.ecr.ap-northeast-1.amazonaws.com/livy',
      version: '7.1.0',
      namespace: 'livy',
      createNamespace: true,
      values: {
        image: '059004520145.dkr.ecr.ap-northeast-1.amazonaws.com/livy/emr-7.1.0:latest',
        sparkNamespace: 'emr',
        serviceAccount: {
          executionRoleArn: livyExecutionRole.roleArn,
        },
        service: {
          annotations: {
            'service.beta.kubernetes.io/aws-load-balancer-subnets': 'testpublicsubnet1,testpublicsubnet2'
          },
        },
      },
    })
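To make the role's trust relationship concrete, here is a minimal Python sketch of the trust policy document that the WebIdentityPrincipal above should synthesize to. The helper name `irsa_trust_policy`, the account ID and the OIDC issuer are placeholders I made up for illustration; only the namespace and service account name come from the CDK code.

```python
import json


def irsa_trust_policy(oidc_provider_arn, oidc_issuer, namespace, service_account):
    """Build a trust policy that lets one Kubernetes service account assume a role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # The cluster's OIDC provider is the federated principal.
                "Principal": {"Federated": oidc_provider_arn},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringLike": {
                        # The key is "<issuer>:sub", the value is the service account subject.
                        f"{oidc_issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}"
                    }
                },
            }
        ],
    }


# Placeholder ARN and issuer for illustration only; substitute your cluster's values.
policy = irsa_trust_policy(
    oidc_provider_arn="arn:aws:iam::111122223333:oidc-provider/oidc.eks.ap-northeast-1.amazonaws.com/id/EXAMPLE",
    oidc_issuer="oidc.eks.ap-northeast-1.amazonaws.com/id/EXAMPLE",
    namespace="livy",
    service_account="emr-containers-sa-livy",
)
print(json.dumps(policy, indent=2))
```

In CDK the issuer is only known at deploy time, which is why the condition is wrapped in CfnJson there instead of being written as a plain object like this.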

The AWS Load Balancer Controller creates an internal NLB for the service, but you can also use port forwarding to reach Livy from your local environment.

Install AWS Load Balancer Controller on EKS cluster and set up ALB Ingress - sambaiz-net

    $ kubectl port-forward -n livy svc/emr-containers-livy 8998:8998
    Forwarding from 127.0.0.1:8998 -> 8998
    Forwarding from [::1]:8998 -> 8998

    $ curl http://localhost:8998/sessions
    {"from":0,"total":0,"sessions":[]}

Call Livy’s REST API to run a Spark job - sambaiz-net
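Once the port forward is up, the same REST API can be driven from a script. The following is a rough sketch using only the Python standard library. The helpers `livy_call`, `session_request` and `run_statement` are names I made up for illustration. The endpoints (`/sessions`, `/sessions/{id}/statements`) are Livy's standard REST API. I am assuming the endpoint needs no authentication and that a real session would also need the Kubernetes-related `conf` values that appear in config.json later in this post.

```python
import json
import time
import urllib.request

LIVY_URL = "http://localhost:8998"  # the port-forwarded endpoint


def livy_call(method, path, body=None, base_url=LIVY_URL):
    """Send one JSON request to Livy and return the decoded JSON response."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(
        base_url + path,
        data=data,
        method=method,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


def session_request(kind="pyspark", conf=None):
    """Build the JSON body for POST /sessions."""
    body = {"kind": kind}
    if conf:
        body["conf"] = dict(conf)
    return body


def run_statement(session_id, code, poll_seconds=2.0):
    """Submit code to a session and poll until the statement leaves waiting/running."""
    stmt = livy_call("POST", f"/sessions/{session_id}/statements", {"code": code})
    path = f"/sessions/{session_id}/statements/{stmt['id']}"
    while stmt["state"] in ("waiting", "running"):
        time.sleep(poll_seconds)
        stmt = livy_call("GET", path)
    return stmt.get("output")
```

A typical flow would be `session = livy_call("POST", "/sessions", session_request(conf={...}))`, then polling `GET /sessions/{id}` until its `state` becomes `idle`, and finally calling `run_statement(session["id"], "spark.sql('show databases').show()")`. Sparkmagic does essentially this on your behalf.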

There does not seem to be an official Sparkmagic image, so I built one using the Dockerfile in the repository as a reference. You can use the %%spark magic to run code on a Spark cluster, but kernels that do this automatically are also provided, so install them if needed.

    FROM jupyter/base-notebook

    USER root

    RUN apt update && \
        apt install -y gcc && \
        apt clean && \
        rm -rf /var/lib/apt/lists/*

    # This is needed because requests-kerberos fails to install on debian due to missing linux headers
    RUN conda install requests-kerberos -y

    USER $NB_USER

    RUN pip install --upgrade pip && \
        pip install --upgrade --ignore-installed setuptools && \
        pip install hdijupyterutils autovizwidget sparkmagic

    # Jupyter extensions changed in >7.x.x
    # For now (workaround), let's pin to 6 to avoid breaking things
    # xref: https://github.com/jupyter-incubator/sparkmagic/issues/825
    RUN pip install notebook==6.5.5

    RUN mkdir /home/$NB_USER/.sparkmagic
    COPY config.json /home/$NB_USER/.sparkmagic/config.json

    RUN jupyter nbextension enable --py --sys-prefix widgetsnbextension
    RUN jupyter-kernelspec install --user $(pip show sparkmagic | grep Location | cut -d" " -f2)/sparkmagic/kernels/sparkkernel
    RUN jupyter-kernelspec install --user $(pip show sparkmagic | grep Location | cut -d" " -f2)/sparkmagic/kernels/pysparkkernel
    RUN jupyter-kernelspec install --user $(pip show sparkmagic | grep Location | cut -d" " -f2)/sparkmagic/kernels/sparkrkernel
    RUN jupyter serverextension enable --py sparkmagic

    USER root
    RUN chown $NB_USER /home/$NB_USER/.sparkmagic/config.json

    CMD [ "start-notebook.sh", "--NotebookApp.token=''" ]

config.json is as follows.

    {
      "kernel_scala_credentials" : {
        "url": "http://host.docker.internal:8998"
      },
      "session_configs": {
        "driverMemory": "1000M",
        "executorCores": 2
      },
      "session_configs_defaults": {
        "conf": {
          "spark.kubernetes.namespace": "emr",
          "spark.kubernetes.container.image": "public.ecr.aws/emr-on-eks/spark/emr-7.1.0:latest",
          "spark.kubernetes.authenticate.driver.serviceAccountName": "emr-containers-sa-spark-driver-*****",
          "spark.kubernetes.file.upload.path": "s3://<bucket>",
          "spark.hadoop.hive.metastore.client.factory.class": "com.amazonaws.glue.catalog.metastore.AWSGlueDataCatalogHiveClientFactory",
          "spark.sql.catalog.spark_catalog.type": "hive"
        }
      },
      "livy_session_startup_timeout_seconds": 180,
      "max_results_sql": 2500
    }
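One thing worth checking in this config: as far as I know, Sparkmagic reads a separate credentials section for each kernel (`kernel_python_credentials`, `kernel_scala_credentials`, `kernel_r_credentials`), and the config above sets only the Scala one. My assumption is that a missing section falls back to Sparkmagic's default URL, which would not reach the host from inside the container, so verify this against the version you install. The small sketch below lists the sections that have no URL. `kernels_without_url` is a hypothetical helper, not part of Sparkmagic.

```python
import json

# Credential sections that Sparkmagic looks up, one per kernel (assumed key names).
KERNEL_CREDENTIAL_KEYS = (
    "kernel_python_credentials",
    "kernel_scala_credentials",
    "kernel_r_credentials",
)


def kernels_without_url(config):
    """Return the credential sections that do not set a Livy URL."""
    return [
        key
        for key in KERNEL_CREDENTIAL_KEYS
        if not config.get(key, {}).get("url")
    ]


# Only the credentials part of the config.json shown above.
config = json.loads(
    '{"kernel_scala_credentials": {"url": "http://host.docker.internal:8998"}}'
)
print(kernels_without_url(config))
# prints ['kernel_python_credentials', 'kernel_r_credentials']
```

If you plan to use the PySpark or SparkR kernel, adding the same `url` under the corresponding section should be enough.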

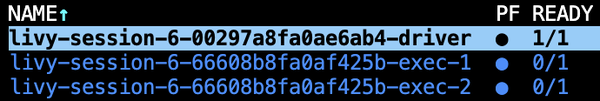

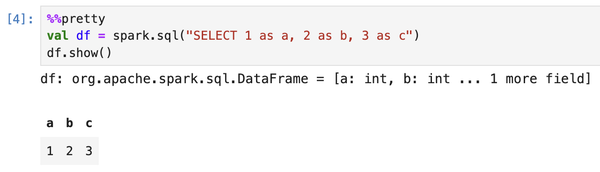

If you execute something like spark.sql() in a notebook, a session is started on the EKS cluster and the results are returned.