Avoiding OOM in count-distinct operations on massive datasets using HyperLogLog++, a probabilistic cardinality estimation algorithm

sparkpapergcpWhen counting unique elements, such as the number of users in access logs, we often execute queries like “count(distinct column_name)”. For massive datasets, regular count() operations can be scaled by splitting the data and summing the results. However, this method doesn’t work with distinct operations, which can lead to extreme slowdowns due to memory shortages or, in the worst case, failure due to OOM errors.

This issue is known as the Count-distinct problem (cardinality estimation problem), and several algorithms have been proposed to estimate cardinality (unique count) without retaining all elements. One of them is HyperLogLog, and its improved version, HyperLogLog++, is widely implemented as approx_count_distinct() in Spark and approx_distinct() in Presto.

spark.sql("""

select count(distinct id)

from test_data

""").show() // 100,818

spark.sql("""

select approx_count_distinct(id)

from test_data

""").show() // 100,598

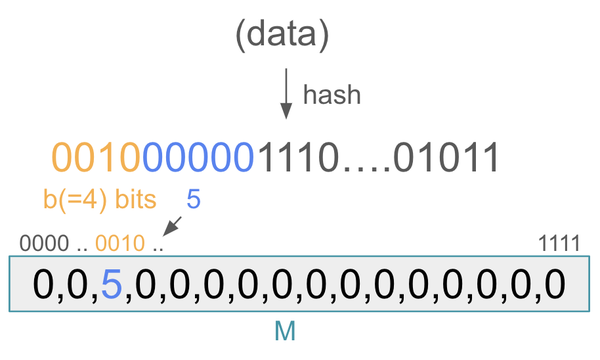

HyperLogLog passes each data to a hash function, distributes them into \(m = 2^b\) registers based on the first \(b\) bits, and for each register, finds the maximum length of the leading zeros in the remaining bits, denoted as \(M[i]\ (1 \leqq i \leqq m)\).

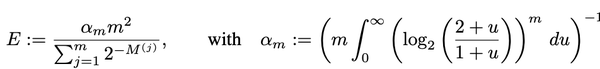

It then estimates the cardinality \(E\) using the following formula, where \(\alpha\) is a correction factor depending on only \(m\).

When \(b=2\ (m = 4)\) and the maximum number of leading zeros for each register is [1, 2, 3, 2], the denominator of E becomes \((\frac{1}{2} + \frac{1}{4} + \frac{1}{8} + \frac{1}{4})\). Multiplying this by m gives the harmonic mean of \(2^{M[j]}\). As the number of elements increases, leading zeros tend to be longer, increasing this value and consequently \(E\).

Increasing \(m\) improves accuracy but also increases memory usage, creating a trade-off. Surprisingly even with \(m = 2048\), the standard error remains around 2.3% regardless of cardinality. However, when cardinality is too low relative to the number of registers, accuracy decreases. HyperLogLog++ addresses this issue by using sparse representation in such cases.

A useful property is that merging registers can yield the result of a union data, which also contributes to the ease of parallel execution. In addition to APPROX_COUNT_DISTINCT(), BigQuery has HLL_COUNT.INIT()/MERGE() functions, which allow you to save registers on aggregation, and to calculate cardinality later by grouping.

SELECT HLL_COUNT.MERGE(hll_sketch) AS distinct_customers_with_open_invoice -- => 3

FROM

(

SELECT

country,

HLL_COUNT.INIT(customer_id) AS hll_sketch

FROM

UNNEST(

ARRAY<STRUCT<country STRING, customer_id STRING, invoice_id STRING>>[

('UA', 'customer_id_1', 'invoice_id_11'),

('BR', 'customer_id_3', 'invoice_id_31'),

('CZ', 'customer_id_2', 'invoice_id_22'),

('CZ', 'customer_id_2', 'invoice_id_23'),

('BR', 'customer_id_3', 'invoice_id_31'),

('UA', 'customer_id_2', 'invoice_id_24')])

GROUP BY country

);