Spark Web UI is a tool for monitoring Jobs and Executors.

Get the Dockerfile from aws-glue-samples, which runs Spark on top of maven:3.6-amazoncorretto-8, and start the History Server with the path of the event logs output by Glue and the credentials needed to read them.

$ git clone https://github.com/aws-samples/aws-glue-samples.git

$ cd aws-glue-samples/utilities/Spark_UI/glue-3_0/

$ docker build -t glue/sparkui:latest .

$ docker run -it -e SPARK_HISTORY_OPTS="$SPARK_HISTORY_OPTS -Dspark.history.fs.logDirectory=s3a://path_to_eventlog -Dspark.hadoop.fs.s3a.access.key=$AWS_ACCESS_KEY_ID -Dspark.hadoop.fs.s3a.secret.key=$AWS_SECRET_ACCESS_KEY" -p 18080:18080 glue/sparkui:latest "/opt/spark/bin/spark-class org.apache.spark.deploy.history.HistoryServer"

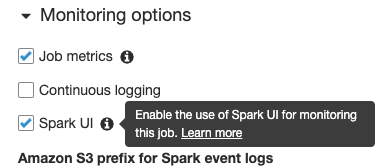

Now you can open http://localhost:18080 and select an application. A timeline of Job execution and Executor additions is displayed.

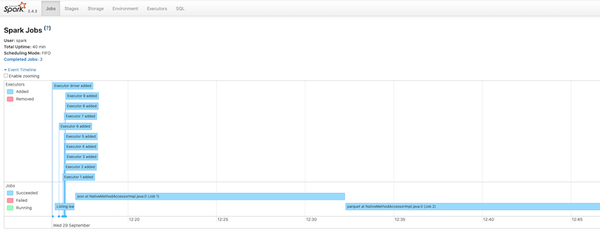

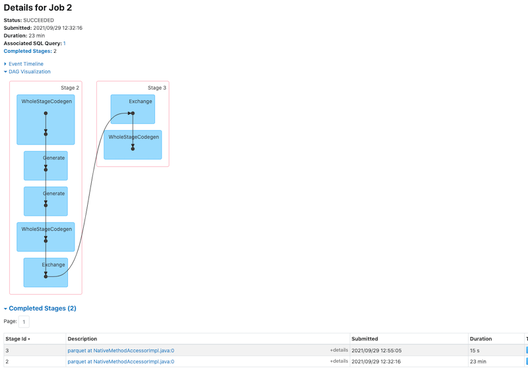

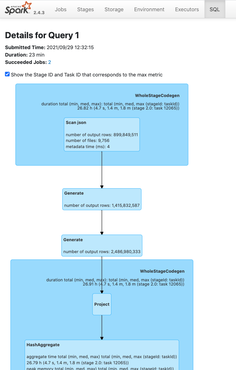
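The same application and Job information is also exposed as JSON through the History Server's REST API under /api/v1, which can be handy for scripting. A minimal sketch, assuming the server started by the docker run command is listening on localhost:18080 and curl is installed; the function names and the HISTORY_SERVER variable are made up for illustration:

```shell
# Base URL of the History Server; 18080 is the port published by docker run.
HISTORY_SERVER="${HISTORY_SERVER:-http://localhost:18080}"

# Build the URL of a resource under the REST API root /api/v1.
api_url() {
  printf '%s/api/v1/%s\n' "$HISTORY_SERVER" "$1"
}

# List the applications whose event logs the server has loaded.
list_applications() {
  curl -s "$(api_url applications)"
}

# List the Jobs of one application; $1 is an application ID from list_applications.
list_jobs() {
  curl -s "$(api_url "applications/$1/jobs")"
}
```

Calling list_applications and then list_jobs with one of the returned IDs prints the data behind the timeline.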

When you select a Job, its Stages and DAG (Directed Acyclic Graph) are displayed as shown below. WholeStageCodegen generates code for a whole Stage at once, instead of running each operator separately, to speed up execution. However, if the generated code is too large, the JVM’s JIT compiler does not compile it to native code and it runs in the interpreter, so it may actually be slower.

Each Stage boundary corresponds to a costly shuffle, so the fewer Stages, the better. If joins are producing many Stages, consider adjusting parameters so that a Broadcast Hash Join, which doesn’t need a shuffle, is used instead.
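One such parameter is spark.sql.autoBroadcastJoinThreshold: Spark broadcasts a table in a join when its estimated size is at most this many bytes (10 MB by default; -1 disables broadcasting). A small sketch of computing a larger threshold and turning it into a --conf flag; the 100 MiB figure is an arbitrary example rather than a recommendation, since the broadcast table has to fit in memory on the driver and on every Executor:

```shell
# Example threshold in MiB (arbitrary value for illustration).
threshold_mib=100

# The setting can be given as a plain number of bytes.
threshold_bytes=$((threshold_mib * 1024 * 1024))

# Flag in the form accepted by spark-submit.
broadcast_conf="--conf spark.sql.autoBroadcastJoinThreshold=${threshold_bytes}"
echo "$broadcast_conf"
```

After changing the setting, the Physical plan should show BroadcastHashJoin in place of SortMergeJoin for the affected join.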

What is Apache Spark, RDD, DataFrame, DataSet, Action and Transformation - sambaiz-net

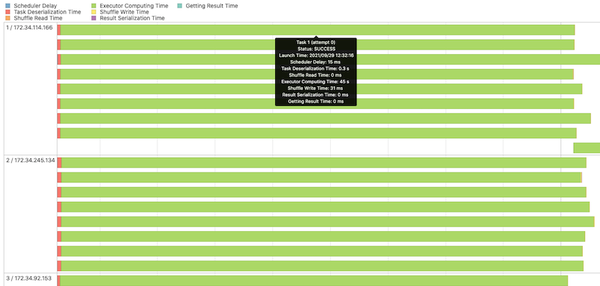

When you select a Stage, the Task timeline of each Executor is displayed. Here most of the time is spent on computing, and the Task execution time and input records are nearly uniform across Executors, so the work appears to be well distributed.
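To check the distribution numerically rather than by eye, the History Server's REST API offers a taskSummary resource that reports quantiles of per-Task metrics for a stage attempt. A sketch, assuming the server is on localhost:18080; the function names are hypothetical, and the application ID, stage ID, and attempt ID are placeholders to be taken from the UI:

```shell
# Base URL of the History Server (assumed to be the one started with docker run).
HISTORY_SERVER="${HISTORY_SERVER:-http://localhost:18080}"

# URL of the task summary for one stage attempt.
# $1: application ID, $2: stage ID, $3: stage attempt ID
task_summary_url() {
  printf '%s/api/v1/applications/%s/stages/%s/%s/taskSummary?quantiles=0.05,0.5,0.95\n' \
    "$HISTORY_SERVER" "$1" "$2" "$3"
}

# Fetch the summary as JSON (requires curl and a running server).
task_summary() {
  curl -s "$(task_summary_url "$1" "$2" "$3")"
}
```

If the 0.95 quantile of a metric such as executorRunTime is far above the median, a few Tasks are doing most of the work, which suggests skew.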

In the SQL tab, you can see the optimized Logical plan of each query, the Physical plan that is actually executed, and how long each operator takes.

References

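How Spark SQL Works in Detail and Performance Tuning, as Taught by an Apache Spark Committer, Part 2 (Apache Sparkコミッターが教える、Spark SQLの詳しい仕組みとパフォーマンスチューニング Part2) - ログミーTech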