Creating Iceberg Tables in S3 Tables from EMR Serverless, inserting data, and querying from Athena

S3 Tables is a storage offering specialized for Iceberg tables that was announced at re:Invent 2024. S3 Metadata, which was announced at the same time and lets you query object metadata such as the last modified date, also writes its output to S3 Tables.

The price in the Tokyo region is 0.0288 USD/GB (up to 50 TB/month), which is about 15% higher than the standard S3 rate of 0.025 USD/GB. In addition, there is a monitoring cost based on the number of objects. Since there are currently no storage classes, you can't apply lifecycle rules to move old data to a cheaper tier, nor use Intelligent-Tiering, which automatically changes the tier of objects that haven't been accessed recently. That said, those options are not free either: lifecycle transition requests cost about $0.01 per 1,000 objects, and Intelligent-Tiering monitoring costs about $0.0025 per 1,000 objects.
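
As a quick sanity check of the two storage rates quoted above, the following sketch computes the relative premium and a storage-only monthly cost. The 10 TB dataset size is a made-up example, and the calculation deliberately ignores request, monitoring, and tiered-pricing effects.

```python
# Rates quoted in this article for the Tokyo region (USD per GB).
S3_TABLES_PER_GB = 0.0288
S3_STANDARD_PER_GB = 0.025


def premium_percent(tables_rate: float, standard_rate: float) -> float:
    """Return how much higher tables_rate is than standard_rate, in percent."""
    return (tables_rate / standard_rate - 1) * 100


def monthly_storage_cost(gigabytes: float, rate_per_gb: float) -> float:
    """Storage-only cost; request and monitoring fees are not included."""
    return gigabytes * rate_per_gb


premium = premium_percent(S3_TABLES_PER_GB, S3_STANDARD_PER_GB)
print(f"S3 Tables premium over S3 Standard: {premium:.1f}%")

# Hypothetical dataset size (assumption for illustration): 10 TB = 10,240 GB.
size_gb = 10 * 1024
print(f"S3 Tables:   {monthly_storage_cost(size_gb, S3_TABLES_PER_GB):.2f} USD")
print(f"S3 Standard: {monthly_storage_cost(size_gb, S3_STANDARD_PER_GB):.2f} USD")
```

Whether the premium matters in practice depends on how much of the data would otherwise have been moved to a cheaper tier.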

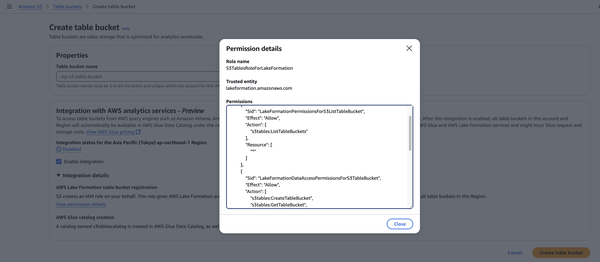

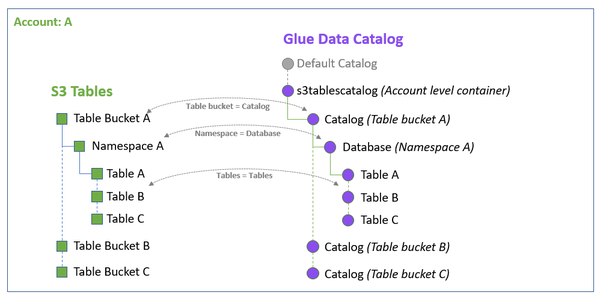

Create a Table bucket. When you enable integration with analytics services like Athena, a role for Lake Formation and a catalog named s3tablescatalog are created, allowing you to query from these services.

When tables are created in S3 Tables, they are automatically reflected in the catalog.

Since emr-7.7.0, the latest EMR Serverless release, doesn't include the S3 Tables catalog implementation, I pass it using spark.jars. Note that with emr-6.15.0, which is currently selected by default when creating a Studio, a NoSuchFieldError occurred in the AWS SDK. Additionally, network configuration is required to call the S3 Tables API.

Running Spark MLlib on EMR Serverless from EMR Studio’s Jupyter Notebook - sambaiz-net

    $ cat build.gradle
    plugins {
        id 'java'
    }

    repositories {
        mavenCentral()
    }

    dependencies {
        implementation 'software.amazon.awssdk:s3tables:2.29.26'
        implementation 'software.amazon.s3tables:s3-tables-catalog-for-iceberg:0.1.5'
    }

    task copyDependencies(type: Copy) {
        from configurations.runtimeClasspath
        into "$buildDir/dependencyJars"
    }

    $ gradle copyDependencies
    $ aws s3 cp --recursive build/dependencyJars s3://(general_purpose_bucket)/jars/

Specify S3TablesCatalog for catalog-impl and the ARN of the table bucket for warehouse.

    %%configure -f
    {
        "conf": {
            "spark.jars": "s3a://(general_purpose_bucket)/jars/*",
            "spark.sql.defaultCatalog": "s3tables_test",
            "spark.sql.catalog.s3tables_test": "org.apache.iceberg.spark.SparkCatalog",
            "spark.sql.catalog.s3tables_test.catalog-impl": "software.amazon.s3tables.iceberg.S3TablesCatalog",
            "spark.sql.catalog.s3tables_test.warehouse": "arn:aws:s3tables:ap-northeast-1:(account_id):bucket/(table_bucket)"
        }
    }
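
If you need the same configuration for several environments, the values that vary (region, account ID, bucket names) can be factored out. The following is a minimal sketch that generates the same "conf" map as the cell above. The helper name `s3tables_spark_conf` and all argument values are placeholders I made up for illustration.

```python
import json


def s3tables_spark_conf(catalog: str, region: str, account_id: str,
                        table_bucket: str, jars_uri: str) -> dict:
    """Build the "conf" map for %%configure from per-environment values."""
    prefix = f"spark.sql.catalog.{catalog}"
    # The warehouse is the table bucket ARN, in the format used in this article.
    warehouse = f"arn:aws:s3tables:{region}:{account_id}:bucket/{table_bucket}"
    return {
        "spark.jars": jars_uri,
        "spark.sql.defaultCatalog": catalog,
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.catalog-impl": "software.amazon.s3tables.iceberg.S3TablesCatalog",
        f"{prefix}.warehouse": warehouse,
    }


# Placeholder values (assumptions); replace them with your own.
conf = s3tables_spark_conf(
    catalog="s3tables_test",
    region="ap-northeast-1",
    account_id="123456789012",
    table_bucket="example-table-bucket",
    jars_uri="s3a://example-general-purpose-bucket/jars/*",
)
print(json.dumps({"conf": conf}, indent=2))
```

The printed JSON can be pasted after `%%configure -f` in the notebook.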

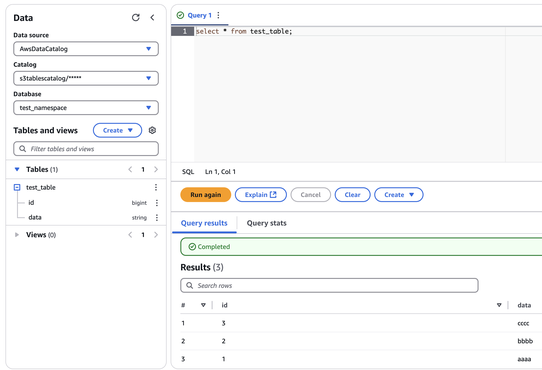

Create a Namespace and Table and insert data.

    spark.sql("CREATE NAMESPACE IF NOT EXISTS test_namespace")
    spark.sql("CREATE TABLE IF NOT EXISTS test_namespace.test_table (id bigint, data string) USING iceberg")

    df = spark.createDataFrame([
        (1, "aaaa"),
        (2, "bbbb"),
        (3, "cccc")
    ], ["id", "data"])

    df.writeTo("test_namespace.test_table").append()

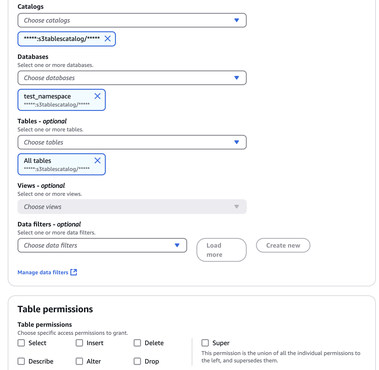

Grant table access permissions to users or roles in Lake Formation. Even if you are an Administrator, you will get an “Insufficient permissions to execute the query” error without proper permissions.
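
The grant can also be scripted instead of done in the console. The following is a rough sketch using boto3's Lake Formation `grant_permissions` call. The catalog ID format `(account_id):s3tablescatalog/(table_bucket)` is my assumption about how S3 Tables catalogs are addressed, and the account ID, bucket name, and role ARN are placeholders, so verify them against your environment before running it.

```python
def build_grant_request(account_id: str, table_bucket: str, namespace: str,
                        table: str, principal_arn: str,
                        permissions=("SELECT",)) -> dict:
    """Build keyword arguments for Lake Formation grant_permissions."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "Table": {
                # Assumed catalog ID format for an S3 Tables catalog.
                "CatalogId": f"{account_id}:s3tablescatalog/{table_bucket}",
                "DatabaseName": namespace,
                "Name": table,
            }
        },
        "Permissions": list(permissions),
    }


def grant(request: dict, region: str) -> dict:
    """Send the request; requires boto3 and valid AWS credentials."""
    import boto3  # imported lazily so the builder above works without boto3

    client = boto3.client("lakeformation", region_name=region)
    return client.grant_permissions(**request)


# Placeholder values (assumptions); replace them with your own.
request = build_grant_request(
    account_id="123456789012",
    table_bucket="example-table-bucket",
    namespace="test_namespace",
    table="test_table",
    principal_arn="arn:aws:iam::123456789012:role/example-athena-role",
)
print(request)
# grant(request, "ap-northeast-1")  # uncomment to actually apply the grant
```

Only `SELECT` is granted here because querying from Athena is the goal. Widen the permission list if the principal also needs to write.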

Select the catalog and query from Athena.
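
For reference, here is a sketch of running the same query through the Athena API instead of the console. It assumes the catalog is exposed to Athena as `s3tablescatalog/(table_bucket)` and can be referenced in a fully qualified name. The bucket names and the results location are placeholders, so adjust them to what the console actually shows.

```python
def quote_ident(name: str) -> str:
    """Double-quote an identifier for Athena SQL, escaping embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def build_select(table_bucket: str, namespace: str, table: str,
                 limit: int = 10) -> str:
    """Build a SELECT against catalog.namespace.table."""
    catalog = f"s3tablescatalog/{table_bucket}"  # assumed catalog name format
    qualified = ".".join(quote_ident(p) for p in (catalog, namespace, table))
    return f"SELECT * FROM {qualified} LIMIT {int(limit)}"


def run_query(sql: str, output_location: str, region: str) -> str:
    """Start the query and return its execution ID; requires boto3 and credentials."""
    import boto3  # imported lazily so build_select works without boto3

    athena = boto3.client("athena", region_name=region)
    response = athena.start_query_execution(
        QueryString=sql,
        ResultConfiguration={"OutputLocation": output_location},
    )
    return response["QueryExecutionId"]


# Placeholder values (assumptions); replace them with your own.
sql = build_select("example-table-bucket", "test_namespace", "test_table")
print(sql)
# run_query(sql, "s3://example-general-purpose-bucket/athena-results/", "ap-northeast-1")
```

If the Lake Formation grant is missing, the query started this way is expected to fail with the same insufficient permissions error described above.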